Introduction to algorithmic dance research

________________________________________________________________________

The algorithmic revolution in artistic practices dates back to the 1950s and has drastically changed not only the way in which art is produced, but also the function and self-conception of its creators. Karlheinz Essl, a pioneer in algorithmic composition, argues on the subject that “it is a method of perceiving an abstract model behind a sensual surface, or in turn, of constructing such a model in order to create aesthetic works” (Essl, 2007: 107-8). The use of live electronics and technology in dance is an experimental approach that is often overlooked. Nevertheless, a small reevaluation has shown that since the 1990s technology-enhanced choreography has proved to be a reliable resource for testing the aesthetic range of movement vocabularies without interrupting the continuity of the dancing. Early models include Laban Movement Methodology software (Life Forms 1989), which was further developed for choreographic purposes by Merce Cunningham (Biped 1999). Later, this software was introduced as a movement-tracking system in improvisation practices by William Forsythe (Improvisation Technologies 2000), and in research projects on dance cognition and AI/choreographic research (Choreographic Coding Labs, NEUROLIVE).

Since 2015, I have created interdisciplinary solo and group performances that rely on technological enhancement. Examples include the random generative scores shown on monitors around the stage in Almosteverythinghappens (2016), the extension of the dancer’s body with microphones in an environment filled with controlled speakers in Extended Resonances (2017), and eventually the blueprint for this research, Solo for Nervous System (2018). These performances resulted from researching ways to expand the possibilities that machine intelligence, such as automatisms, random operations, rule-based systems, and autopoietic strategies, offers artistic research. What binds these choreographies is the way they reinterpret open form composition. This composing method, developed by composer Earle Brown in the 1950s, was integrated into dance in the form of postmodern task-based choreographic strategies in which the dancers’ choice-making was based on their own intuition, judgment and abilities (Da Silva, 2010: 11). More specific information and recordings of the choreographies listed above can be found on my website (www.klaasdevos.eu) under the section ‘CHoRGRPH’.

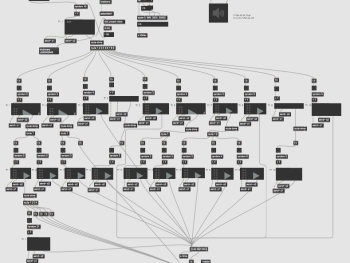

By integrating self-developed software in Nervous Systems, I experiment with the implicit knowledge production of personal and social habits and habitats of performers. In R.E.A.Ch., software is used as a data-management tool but also, and more importantly, as a co-creative partner in artistic research: a partner that gives “access to a layer of reality we don't have conscious experiential access to”. For example, it expands what is consciously noticeable in improvisation by rendering lucid somatic information such as sweat and temperature through thermodynamic technology, or allows us to experience and process movement in temporal frames that are too fast or too fragmented for the human to consciously engage with (Ernst, 2016). The technological blueprint of R.E.A.Ch. is the software made for Solo for Nervous Systems; it produces real-time generated scores that trigger interactions between a dancer, the movements, the score, and the spectator. The software is developed using Max/MSP, “a visual object-oriented programming language, initially designed for interactive musical performance and which is also suitable for digital signal processing as well as real-time control” (Essl, 2007: 123). In later stages, I hope to expand this software using other programming languages (Python, C++) that are better suited to developing learning algorithms as creative partners in improvised dance.
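To make the idea of a generated score more concrete, the following Python sketch combines a random operation with one simple rule. It is an illustration only and not the software used in the performances: the cue names, the duration range, the no-repeat rule, and the function name `generate_score` are all assumptions introduced for this example.

```python
import random

# Hypothetical movement cues; placeholders, not taken from the actual score.
CUES = ["pause", "spiral", "fall", "reach", "shake"]


def generate_score(length, seed=None, cues=CUES):
    """Return a list of (cue, duration_in_seconds) pairs.

    Random operation: each cue is drawn uniformly at random.
    Rule (an assumption for this sketch): a cue never directly
    repeats the one before it.
    """
    rng = random.Random(seed)  # a seed makes a score reproducible
    score = []
    previous = None
    for _ in range(length):
        # Apply the rule by excluding the previous cue from the draw.
        options = [cue for cue in cues if cue != previous]
        cue = rng.choice(options)
        duration = rng.randint(2, 8)  # assumed range, in seconds
        score.append((cue, duration))
        previous = cue
    return score


if __name__ == "__main__":
    # Print a short score as it might appear on a monitor.
    for cue, duration in generate_score(5, seed=1):
        print(f"{cue} for {duration}s")
```

Leaving the seed unset gives a different score on every run, while a fixed seed allows a particular score to be revisited in rehearsal. A real-time version would emit one cue at a time rather than a complete list.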

________________________________________________________________________